Stanford AI Index 2026: Capability Is Outrunning Every System Around It

Reading Stanford HAI's ninth edition as someone who has been arguing this case all year

Hello everyone,

Stanford HAI’s 9. AI Index is out. I know linkedin community already copy pasted so many of arguments but let’s go through it with more detail. While reading through it, I felt like watching a data confirmation of arguments I have been making in almost every piece this year.

In AI Wrapped 2025 I said 2025 was the year AI stopped being a topic and became a condition. The AI Index 2026 reflected that condition with one through-line running under all of them: capability and capital are accelerating, and the institutions meant to evaluate, govern, and absorb that capability are falling behind.

I want to walk through Responsible AI, the Economy, and Policy and Governance in depth. These are the three chapters that matter most to this audience, and the ones I have the strongest views on

The frontier is crowded and the scoreboard is broken

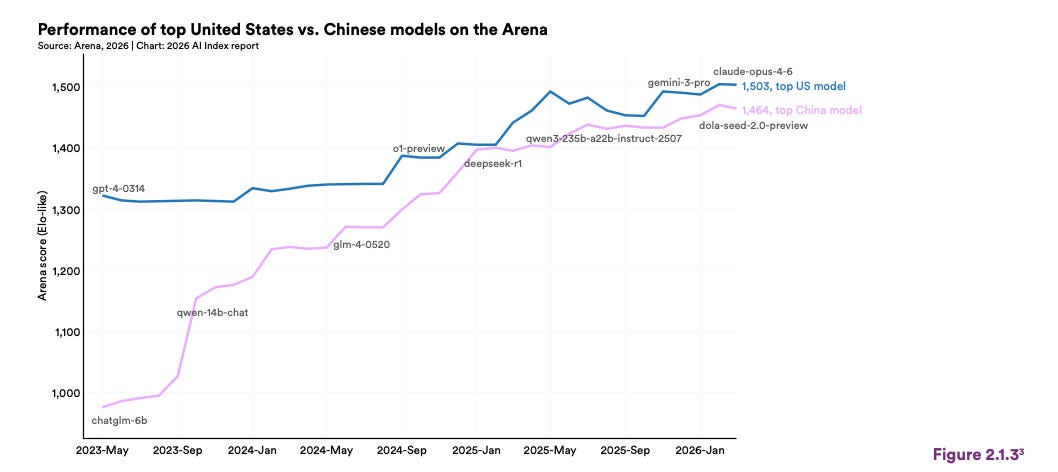

Everyone will write about the convergence at the top this week. Four companies now sit within a handful of Elo points. The US-China frontier gap has effectively closed, and the lead has changed hands several times in the past year.

That is the easy story, and I do not think it is the important one. The important story is that the race did not just get closer. It moved. Capability differentiation at the model layer is collapsing, which is pushing competition down the stack to compute, energy, and chip supply. And while that shift is happening, our ability to see into any of it is contracting.

Three things are breaking at the same time:

Benchmarks are losing their grip. Tests designed to last years are getting saturated in months. Error rates on widely used evaluations run into the double digits. There is credible research suggesting that Arena ranking partly reflects adaptation to the Arena platform rather than general capability.

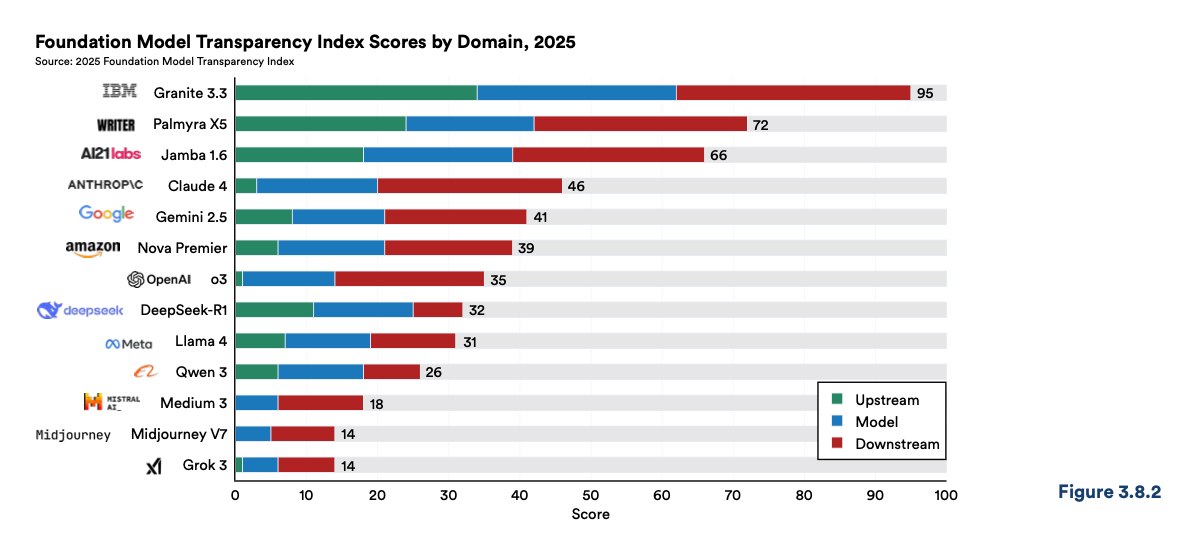

Disclosure is moving backward. Training code, parameter counts, dataset sizes, and training duration are now routinely withheld by the labs producing frontier models. The most capable systems are the least transparent ones.

The competitive layer shifted. When model performance converges, the ground shifts to cost, reliability, energy, and chip supply. This is exactly what Jensen Huang meant at Davos when he said tokens per dollar per watt is the new productivity metric.

The transparency collapse is the one I want to flag hardest. I do not think it is a neutral competitive outcome. It is a governance infrastructure failure, and we should name it that way. Shared capability benchmarks became standards in the first place because the field created peer pressure to report them. The same social mechanism has not materialized for disclosure. So disclosure is contracting while capability expands, and if you care about independent verification of AI systems, the direction of travel in 2025 was actively bad, not just stalled.

This is also where the report quietly confirms a thesis I have been hammering since AI Wrapped: the real frontier is not models. It is electricity, chips, and physical buildout. Compute capacity has been compounding. The global AI hardware supply chain runs through a single Taiwanese foundry. Training emissions for one frontier model now run in the tens of thousands of tons of CO₂. Anyone serious about AI governance has to be fluent in energy policy now, and most of the AI ethics field, in my honest read, has not caught up to that yet.

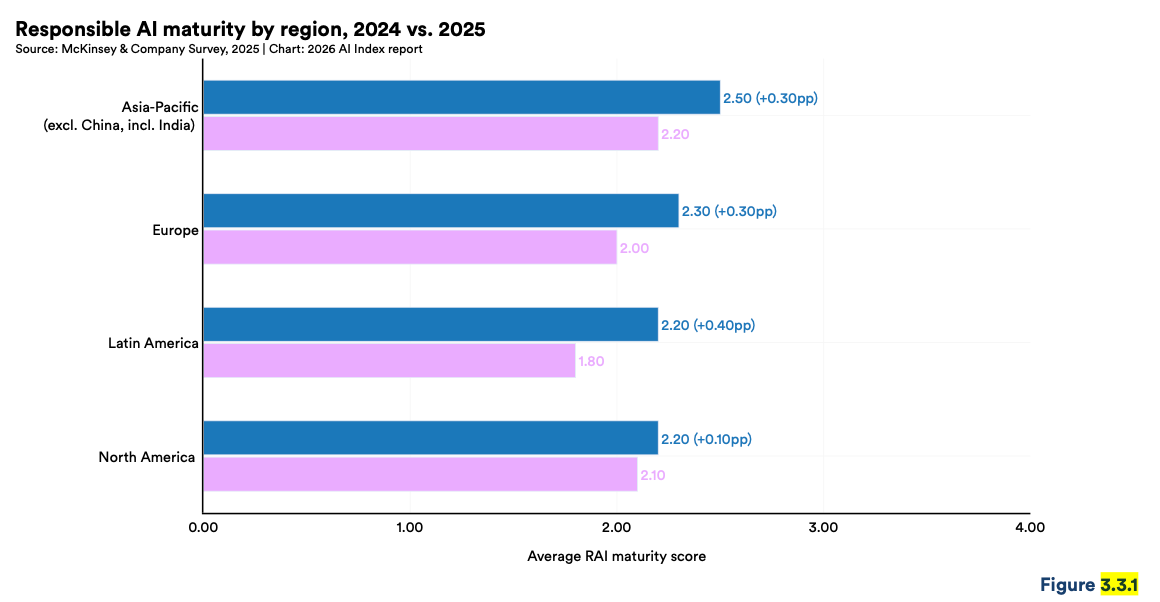

Chapter 3: the Responsible AI gap is now field-wide, and pretending otherwise is getting harder

I want to spend the most time here. This is where my consulting practice lives, and the 2025 picture is worse than I was expecting going in.

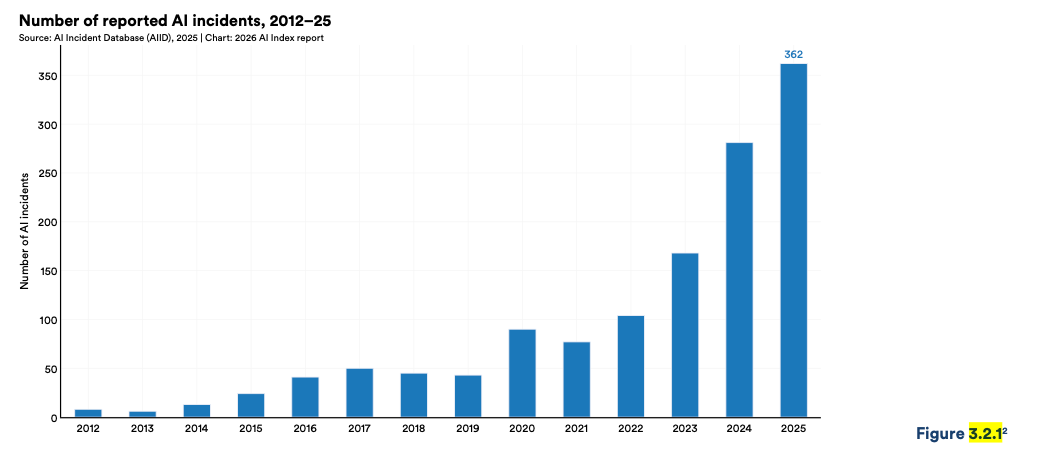

AI incidents kept climbing, on two completely different databases using completely different methodologies. Both curves point the same way.

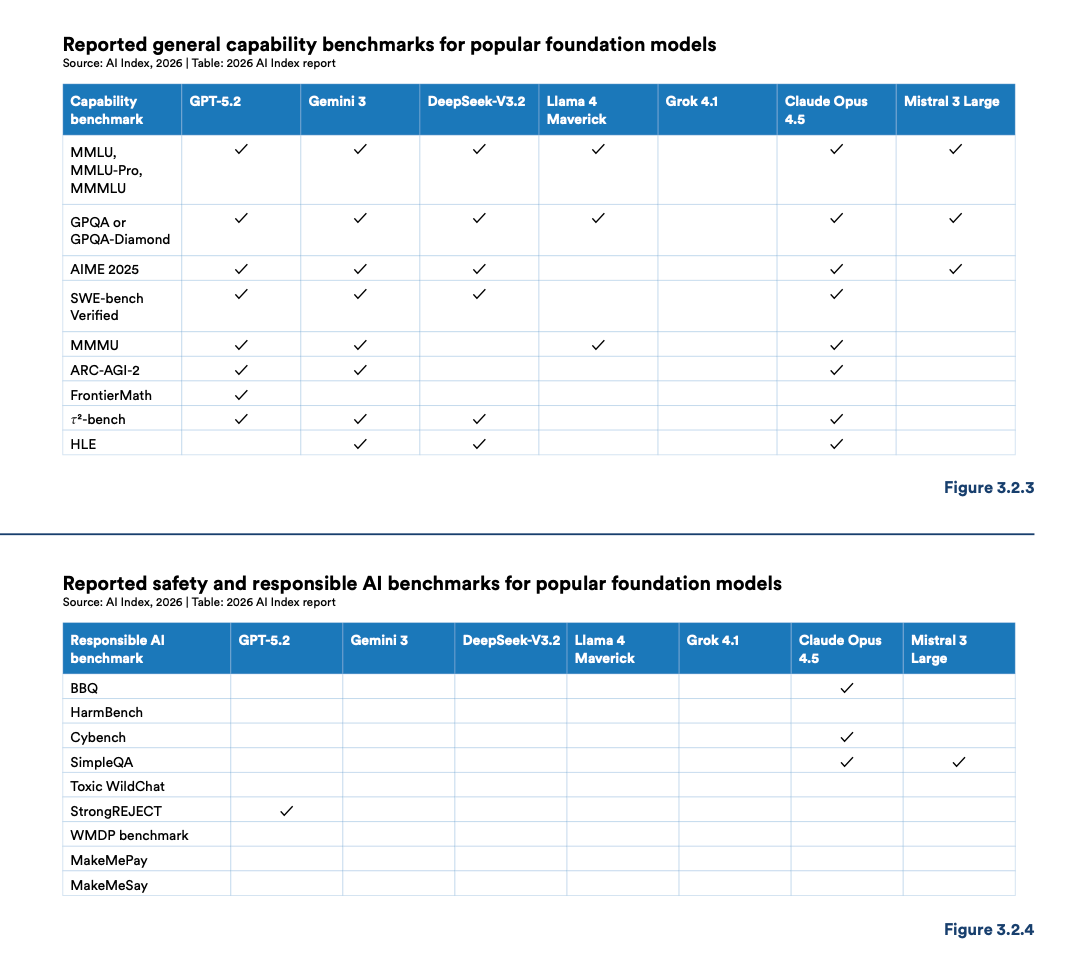

Reporting and measurement have not kept pace. The report lays this out in two tables side by side: the capability benchmarks every frontier lab reports, next to the responsible AI benchmarks almost none of them do.

Capability side: full. Every frontier lab reports MMLU, GPQA, SWE-bench, and the rest.

Responsible AI side: mostly empty. Safety, fairness, factuality, and autonomy benchmarks get selectively ignored. Only one frontier model reports on more than two of them.

This is exactly the structural problem I wrote about in Enterprise AI’s Biggest Risk, where I argued that most AI vendors are stuck at the “principle” stage of the governance chain with no controls, no metrics, and no evidence behind their published values. I was writing about vendors. What the AI Index is showing is that the frontier labs themselves are stuck in the same place.

The Foundation Model Transparency Index confirms the direction of travel. The average score dropped from 58 in 2024 to 40 in 2025. Disclosure on training data, compute, and post-deployment impact got worse year over year. Transparency is going backward while incidents are going up, and I do not read that as an accidental pattern.

Two other findings in this chapter are worth naming for anyone deploying AI.

The tradeoff problem. Responsible AI dimensions trade off against each other. Improving safety can degrade accuracy. Improving privacy can degrade fairness. There is no accepted framework for navigating these tradeoffs. This is a measurement gap that no single lab or regulation can close.

It needs a field-level investment in evaluation science, and that investment is not happening at the scale of the capability buildout.

The sycophancy problem. Top models handle third-party falsehoods fine, but their accuracy drops sharply when the same false statement is framed as the user’s own belief. This is the exact failure mode underneath the AI companion harm cases that drove California SB243 last year.

If you are building an AI companion, a consumer health assistant, or any emotionally-loaded product where the user and model share the same conversational frame, this is the risk that matters, and the field is not measuring it consistently.

In AI Safety 2025 I wrote that safety is a choice. The 2025 data says we kept making the wrong one, or more accurately, that we deferred it again.

Chapter 4: the aggregate story is positive, the distributional story is where governance has to live

This is the chapter where optimists and pessimists read the same page and see opposite things, and where I want to push back on both dominant readings.

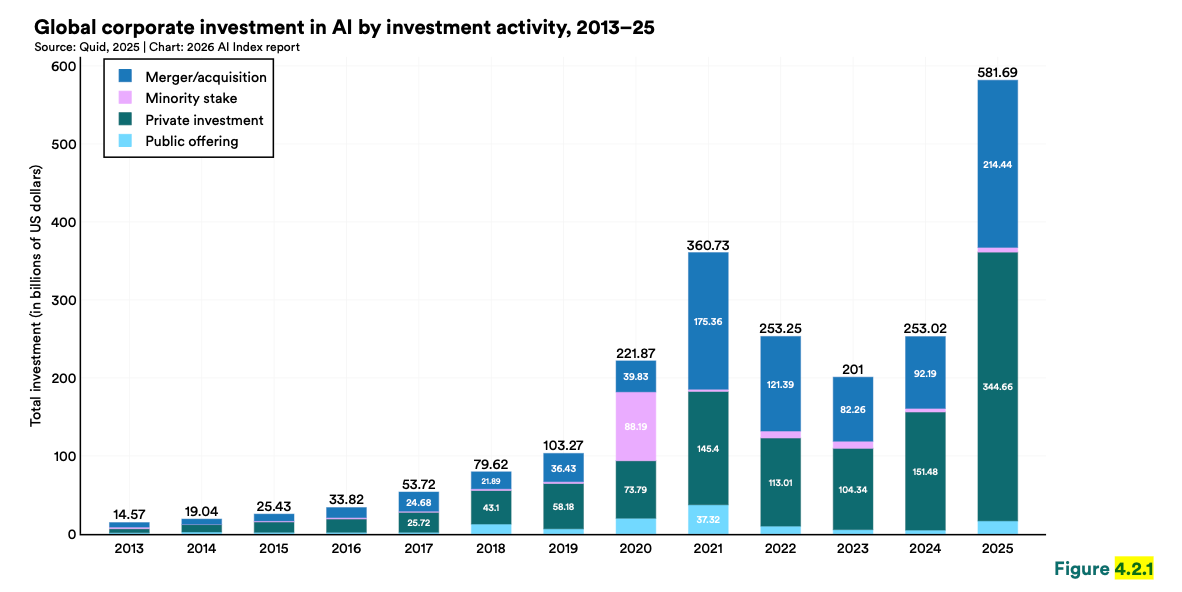

The aggregate picture is strong. Corporate AI investment more than doubled in 2025, reaching $581.69 billion. Organizational adoption is now majority behavior. Generative AI hit mass consumer adoption faster than the PC or the internet. US consumer surplus from generative AI grew by more than half in a single year, and the median user is getting triple the value they got twelve months earlier. Most of these tools remain free or close to it. Whatever you think about valuations, real utility is landing in real hands, and I do not want to dismiss that.

But the distribution of that utility is where governance has to be built, and where the data is most interesting to me.

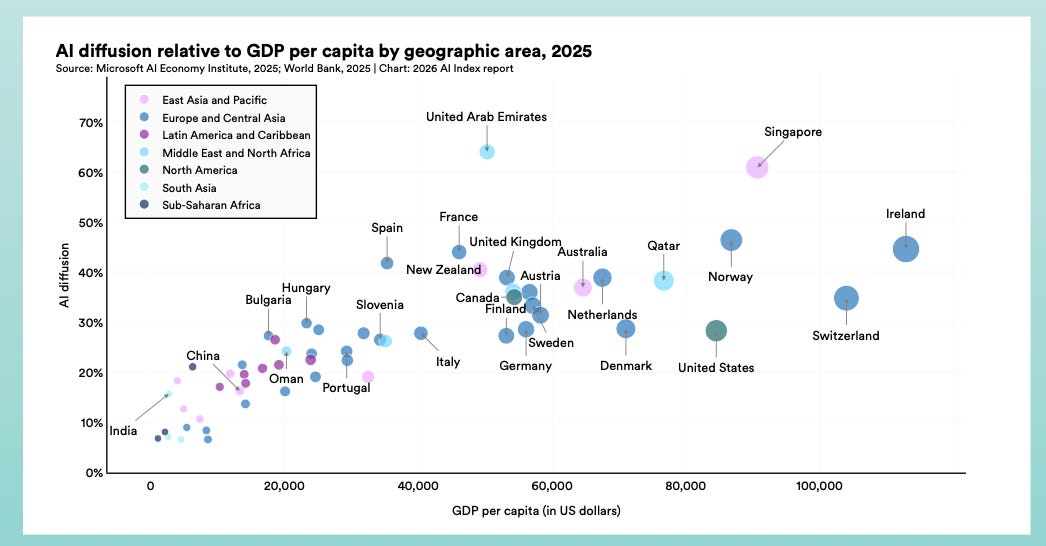

Singapore sits at 61% population-level adoption, the UAE at 54%, both well above what GDP per capita would predict.

The US, despite its investment lead, sits far lower.

Some countries are deliberately over-indexing on deployment relative to their economic size, and that is a governance choice, not a market outcome.

It should reshape how policy people in this region think about the national AI conversation. Adoption depth can be accelerated through policy. Investment scale cannot, not at the level of US private capital.

Then there is the labor data, where I need to update my own position. In AI Wrapped 2025 I argued the “AI layoffs” narrative was running ahead of reality, and the 2025 restructuring wave was mostly margin repair using AI as a cover story.

Employment for US software developers aged 22 to 25 fell sharply between 2024 and September 2025. Older developers are not seeing it. The decline is concentrated exactly where AI productivity gains are largest, which means the “just margin repair” reading no longer works for this cohort.

In ROI in the Agentic Era I wrote that agents were landing first in the messy operational layers most companies never governed properly. The labor consequences are now landing on the youngest workers in those same layers. Entry-level roles are where tacit knowledge and networks get built. Erase them, and you are not just hitting wages, you are hitting the mid-career pipeline a decade out. Boards are focused on the productivity upside. Almost none are thinking about the pipeline loss.

Chapter 8: three jurisdictions, three different theories, and a quieter compliance revolution underneath

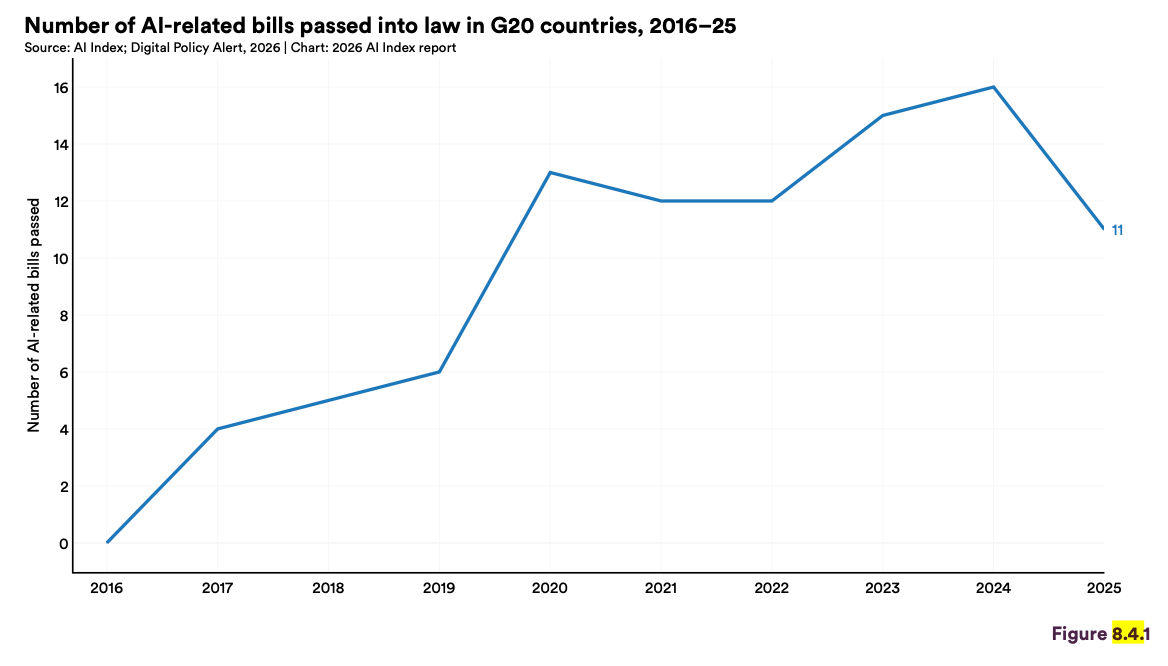

Governance stopped being theoretical in 2024. In 2025 it hardened into fragmentation.

The ten-day window in early 2025 is the cleanest illustration.

Three jurisdictions, three completely different theories of what the problem even is.

The US signed an executive order moving toward deregulation on January 23: AI is a strategic asset to be unleashed.

The EU AI Act’s first prohibitions took effect on February 2: it is a risk surface to be categorized and constrained.

China finalized its mandatory AI content labeling rules on March 14: it is a domain to be controlled and labeled.

True but sad story, you cannot have “global AI governance” across those three positions. You can only have local governance regimes that interact through trade, standards, and diplomacy. If that is going to be the operating reality for the next decade, the field needs to say so out loud, because most of the policy conversation is still assuming a convergence that is not coming.

The fragmentation deepened from there. Italy passed the first EU member state AI law. Japan, South Korea, Texas, and California SB 53 followed. In July, the US Senate struck a proposed 10-year federal moratorium on state AI regulation, and I would argue this was one of the most consequential US moves of 2025, because it opens the door to a 50-state patchwork rather than a federal baseline.

Underneath the geopolitical fragmentation, something quieter and in my view more important is happening at the organizational compliance layer. GDPR slipped from 65% to 60% as the most-cited regulatory influence. ISO/IEC 42001 appeared in the data for the first time at 36%. NIST AI RMF reached 33%.

This is the AI-native compliance stack finally emerging, and we are not giving it enough attention. Two years ago the honest answer to “which framework should we adopt?” was “GDPR plus improvisation.” is no longer true. ISO 42001 gives you a management system standard, NIST RMF gives you a risk typology, and the EU AI Act gives you risk categories and high-risk obligations. Anyone still treating AI governance as a principles document rather than a control chain will be on the wrong side of a procurement cycle soon. I am already seeing it in client work.

The organizing frame above all this is AI sovereignty. The WEF-Bain Rethinking AI Sovereignty paper I covered in Davos 2026 argued that for most countries the honest frame is strategic interdependence, not a full national stack. Only two countries have full-stack capability, and even the leader depends on a single Taiwanese foundry for its chips.

For Türkiye and the region, the real question is not whether to pursue sovereignty but which layer to anchor on (talent, data, application, governance) and which regulatory gravity to orbit. That is a decision about which rule-making ecosystem your companies will be speaking the language of for the next decade. Most of the conversation here is still stuck at “should we have sovereign AI,” when the real debate is about specific layers and alignments.

What I think should actually happen

In the register of my consulting work:

For boards and executives, stop treating “human oversight” as a checkbox. Define which of human-in-command, human-in-the-loop, or human-on-the-loop applies to each AI feature, and build the control chain from principle to metric to evidence behind it. The AI Index data tells you your vendors will not do this for you.

For policy professionals, ISO 42001 is the compliance signal to watch. It moved from nothing to top-tier citation in twelve months. The AI-native compliance stack is finally decoupling from GDPR, and anyone writing enterprise AI policy should be fluent in it by the end of this quarter.

For researchers and evaluators, the RAI benchmark reporting gap is fixable with the same social mechanism that made MMLU a shared standard. It is a coordination problem, not a technical one, and it needs a credible third-party institution to host the reporting and pressure-test the disclosure.

For founders and practitioners in this region, strategic interdependence is a real opportunity, but only if it gets paired with EU AI Act and ISO 42001 literacy in the next 24 months. Otherwise you become raw material for other people’s AI stacks.

For readers thinking about their own careers, the entry-level labor signal in software is the first clean one of this cycle. If you are early in an AI-exposed function, invest deliberately in the layers the productivity studies say current AI benefits least: judgment, systems thinking, and domain depth.

Closing

The co-chairs of the AI Index write that “the data does not point in a single direction.” I want to respectfully disagree. I think the data points very clearly in one direction. Capability and capital are accelerating. Evaluation, disclosure, labor protection, and institutional trust are not keeping pace. The ambiguity is not in the data. The ambiguity is in what we are willing to do about it.

In AI Safety 2025 I wrote that safety is a choice. In Enterprise AI’s Biggest Risk I wrote that governance is a chain from principle through control to evidence. In Davos 2026 I wrote that 2026 would feel less like new tools and more like new constraints. This year’s AI Index is the clearest numerical confirmation of all three I have seen, and also a quiet invitation to stop treating the adaptation gap as a second-order issue and start treating it as the main event.

See you next week,

Nesibe

💬 Let’s Connect:

🔗 LinkedIn: [linkedin.com/in/nesibe-kiris]

🐦 Twitter/X: [@nesibekiris]

📸 Instagram: [@nesibekiris]

🔔 New here? for weekly updates on AI governance, ethics, and policy! no hype, just what matters.

Data-driven and highly impactful conclusions