When an LLM Starts Thinking With Living Neurons

How a biocomputer with 200,000 living human neurons is quietly reshaping the future of AI, energy, and consciousness debates.

Hey everyone, I’m genuinely challenging myself to keep you updated. This is a lot of work: tracking news not daily but hourly, digging into background, sketching possible scenarios, assessing governance risks so you can see around corners, and then writing it all up here. Of course, I do lean on AI tools in the background to help me organise and clean up the flow, but every line you read is still my judgement and my voice: less machine, more us.

Over the next few weeks you’ll probably see some version of this headline in your feed:

“They hooked a live brain up to an LLM. 200,000 human neurons decide where the AI wants to go on vacation.”

The story is kind of real. A solo developer rented Cortical Labs’ CL1 “biocomputer”, wired it into a small language model, and let 200,000 lab‑grown human neurons nudge the model’s token choices in real time.

It feels like a Matrix moment. It is not. I have long suspected that American science fiction often shows us what people are already working on, and doubles as a way of measuring how much attention we will pay to it. It is also a good moment to pause and ask what is actually going on, what is absolutely not happening, and why this line of work matters far beyond a viral demo.

What this hybrid LLM–biocomputer actually does

The setup is surprisingly simple.

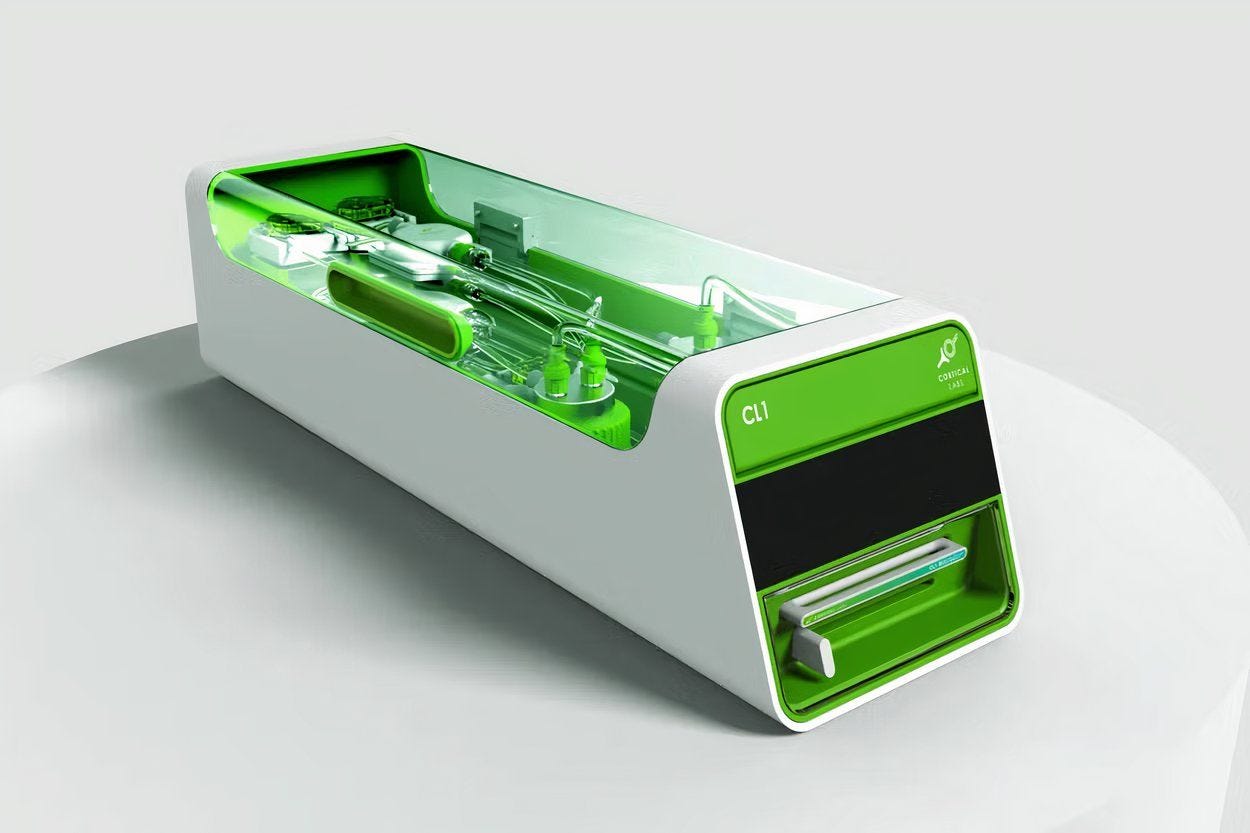

Hardware: Cortical Labs’ CL1 is a biological computer built around a microelectrode array with about 200,000 human neurons grown from stem cells. The neurons live in nutrient solution, fire electrical impulses, and form a small but real neural network in vitro.

Model: On the developer’s local machine, a relatively small 350M‑parameter language model runs as usual.

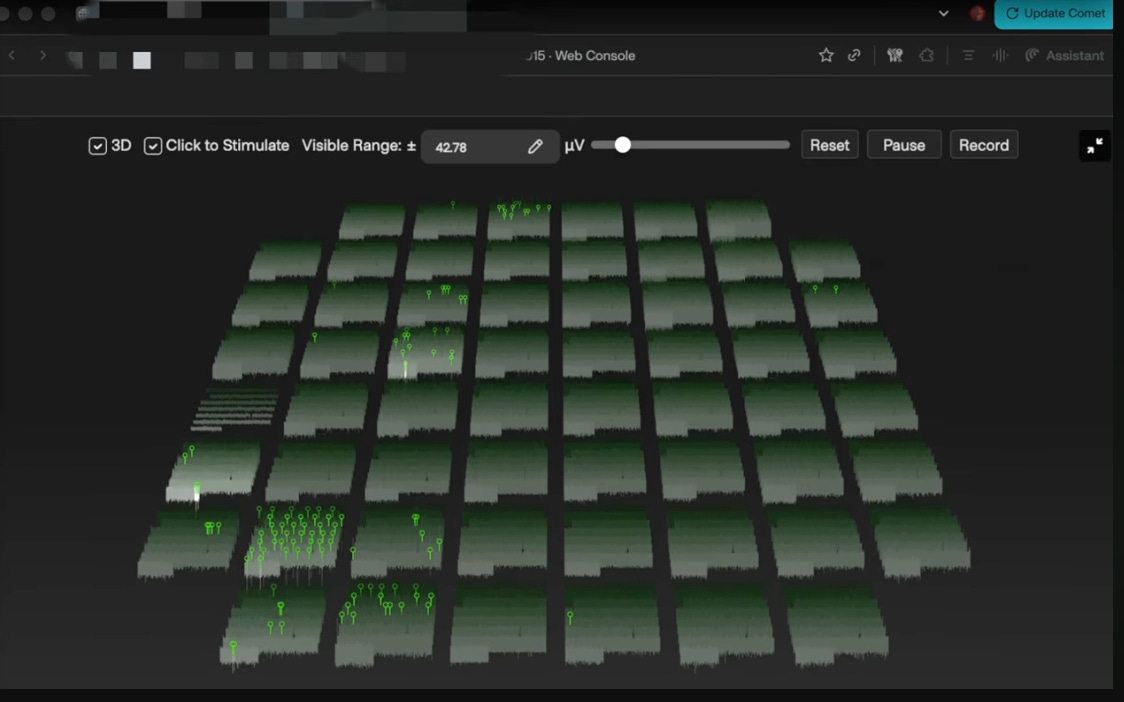

Bridge: A custom “encoder” turns model state or token candidates into stimulation patterns for CL1, then reads back the neurons’ activity and uses that signal to re‑weight the model’s token probabilities.

In plain language: the LLM proposes a next word, the neurons get a say, and sometimes they overrule it.
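Here is a toy Python sketch of one generation step that makes the bridge concrete. Everything on the hardware side is an assumption. `encode`, `decode` and `mock_culture` are hypothetical stand-ins, and a random spike generator replaces the living culture. None of it is the developer's CL1_LLM_Encoder or Cortical Labs' API. The only thing taken from the description above is the shape of the loop: propose candidates, stimulate, read activity back, re-weight, pick.

```python
import math
import random


def softmax(logits):
    # Numerically stable softmax over a list of floats.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def encode(candidate_ids, channels_per_token=4):
    # Hypothetical encoder: each candidate token gets its own block of
    # electrode channels to stimulate. Real channel layouts will differ.
    return {
        tok: list(range(i * channels_per_token, (i + 1) * channels_per_token))
        for i, tok in enumerate(candidate_ids)
    }


def mock_culture(stim_map, rng):
    # Stand-in for the living culture: a random spike count per stimulated
    # channel. A real system would stimulate and record through the hardware.
    return {ch: rng.randint(0, 10) for chans in stim_map.values() for ch in chans}


def decode(stim_map, spikes):
    # Hypothetical decoder: mean spike count over each token's channel block.
    return {
        tok: sum(spikes[ch] for ch in chans) / len(chans)
        for tok, chans in stim_map.items()
    }


def neuro_step(logits, k=5, beta=0.3, rng=None):
    # One step: top-k candidates -> stimulate -> read back -> re-weight -> sample.
    if rng is None:
        rng = random.Random()
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    stim_map = encode(top)
    activity = decode(stim_map, mock_culture(stim_map, rng))
    mean_act = sum(activity.values()) / len(activity)
    # Centre the activity so the culture pushes some candidates up and others down;
    # beta sets how strongly neural activity shifts the model's logits.
    adjusted = [logits[i] + beta * (activity[i] - mean_act) for i in top]
    probs = softmax(adjusted)
    chosen = rng.choices(top, weights=probs, k=1)[0]
    return chosen, top[0], dict(zip(top, probs))


if __name__ == "__main__":
    logits = [2.0, 1.5, 0.3, -1.0, 0.9, 0.1]  # toy logits for a 6-token vocabulary
    chosen, model_top, probs = neuro_step(logits, k=3, rng=random.Random(0))
    print("model's top token:", model_top, "| emitted:", chosen,
          "| overruled:", chosen != model_top)
```

With `beta=0.0` the neurons have no say, and the step reduces to ordinary top-k sampling from the model. Raising `beta` is what lets the culture overrule the model's favourite token.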

In his video, titled “BioLLM – I made an AI that ‘thinks’ with REAL neurons!”, the developer walks through the interface: you can literally watch which electrode channels were stimulated and which channels fired back when a particular letter or word was chosen. It is a primitive, very early bio‑feedback loop over token selection.

One conversation that circulated widely went like this:

User: “Where would you like to go on vacation?”

System: “Great Barrinchi Cove in the Maldives.”

That place does not exist. Classic hallucination. The system then follows up with something real: Tuscany, Italy, for the hills and views.

In one run, the neurons overrode the model’s top‑probability token 19 times during a single conversation. In other words, this is not just a decorative oscilloscope on the side. The living tissue is actually altering what the model says.
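The “19 overrides” figure is easier to reason about once it is pinned down. An override is presumably a step where the emitted token differs from the model’s own top‑probability token. Below is a minimal sketch of that count. It assumes a per‑step log with two fields, and the `Step` record and its field names are hypothetical, not the BioLLM log format.

```python
from dataclasses import dataclass


@dataclass
class Step:
    # Hypothetical per-token log record; field names are illustrative only.
    model_top: str  # highest-probability token according to the LLM alone
    emitted: str    # token actually emitted after the neural re-weighting


def count_overrides(steps):
    """Number of steps where the emitted token differs from the model's top choice."""
    return sum(1 for s in steps if s.emitted != s.model_top)


def override_rate(steps):
    """Fraction of steps that were overridden (0.0 for an empty log)."""
    return count_overrides(steps) / len(steps) if steps else 0.0


if __name__ == "__main__":
    toy_log = [Step("Tus", "Tus"), Step("cany", "cany"), Step(",", " for")]
    print(count_overrides(toy_log), override_rate(toy_log))
```

The count alone leaves one question open. If the model samples rather than always taking its top token, some “overrides” would happen with no neurons involved at all. The number that means most is therefore the override rate compared against a neuron‑free baseline run.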

The price tag: around 35,000 dollars for a CL1 unit, plus a cloud access model if you don’t want wetware on your own bench.

Is this “consciousness”? Almost certainly not.

The internet, predictably, jumped straight to: “Mini brain gained consciousness and is now dreaming of beaches.”

This tells us more about our anxieties than about the system.

A few basic numbers help:

A human brain has on the order of 80–90 billion neurons; the go‑to estimate is about 86 billion.

CL1 in this configuration has about 200,000 neurons.

That is not a mini‑brain. It is closer to a tiny neighbourhood in a huge city.
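The size gap is easier to feel as a ratio. Here is some rough arithmetic using the 86 billion estimate and the roughly 200,000 neurons quoted for this CL1 setup:

```latex
\frac{2 \times 10^{5}}{8.6 \times 10^{10}} \approx 2.3 \times 10^{-6} \approx 0.00023\%,
\qquad
\frac{8.6 \times 10^{10}}{2 \times 10^{5}} = 4.3 \times 10^{5}
```

In other words, one human brain holds roughly as many neurons as 430,000 cultures of this size.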

On top of that:

There is no full brain structure, no layered architecture resembling cortex.

There is no body, no sensory organs, no closed sensorimotor loop in the world.

There is no obvious way to talk about stable self‑experience or phenomenology at this scale.

Organoid and organ‑on‑chip researchers have been explicit about this. The Johns Hopkins group that coined “organoid intelligence” in 2023 is careful: current systems are far below any plausible threshold for consciousness, but they still insist we need ethics and governance in place now because the field is moving fast.

So what are these neurons doing, functionally?

A good mental model is:

They provide a biological noise / bias layer on top of the LLM’s probability distribution.

They slightly push and pull the model’s token choices, but they are not an “agent” that secretly wants to go on vacation.
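“Push and pull” can be stated precisely with a simple model. This is an assumed model, not the rule BioLLM actually uses. Let p_i be the model’s probability for candidate token i, b_i a neural activity score for that token, and β ≥ 0 a coupling strength. The symbols b_i and β are illustrative; neither is a quantity reported for the demo. A bias layer then re‑weights the distribution as follows:

```latex
p'_i = \frac{p_i \, e^{\beta b_i}}{\sum_j p_j \, e^{\beta b_j}},
\qquad
p'_k > p'_{\text{top}}
\iff
\beta \left( b_k - b_{\text{top}} \right) > \ln \frac{p_{\text{top}}}{p_k}
```

With β = 0 the model is untouched, and adding the same constant to every b_i changes nothing. The second expression says when the neurons can overrule the model. That happens only when their preference for an alternative token outweighs the log‑odds gap the model already assigns in favour of its top choice.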

“200,000 neurons are dreaming of a beach” is a great metaphor. It is not an accurate technical description, and it is not a stable ethical foundation.

What we are really looking at is an early, somewhat fragile hybrid architecture where living tissue perturbs a small language model’s behaviour. That alone is historically interesting, without needing to claim that the dish has opinions about Tuscany.

A very short history: from Pong, to Doom, to token selection

This experiment did not come out of nowhere.

DishBrain and Pong (2022)

Cortical Labs’ first splash was DishBrain: roughly 800,000 neurons in a dish, grown on a microelectrode array, learning to play Pong in real time. The peer‑reviewed paper in Neuron is titled “In vitro neurons learn and exhibit sentience when embodied in a simulated game‑world”.

The provocative “sentience” wording drove a lot of attention and critique, but the experiment was serious neuroscience: an embodied neural culture adjusting its activity to minimise prediction error in a simple game environment, framed with Karl Friston’s free energy principle.

CL1: a commercial biological computer (2025)

In March 2025, Cortical Labs launched CL1, a packaged biocomputer you can buy for about 35,000 dollars or access via the cloud. It runs their biOS operating system and lets researchers interact with the neurons via an API. A few months later we started seeing external demos, including videos of Doom running on CL1.

BioLLM and token selection (2026)

The current BioLLM demo comes from an independent developer who rented CL1 over Cortical Cloud, wired it into a small LLM and published the whole thing on YouTube and GitHub. It is not a polished product, and that is exactly what makes it interesting: it shows what a motivated individual can do once biological compute becomes API‑accessible.

Pong → Doom → “help me pick the next token” is not a straight line to AGI. But it is a clear trajectory: from “neurons can do something goal‑directed in a toy world” to “neurons can sit inside a modern AI stack and modulate its outputs.”

The bigger question: what happens when you scale this?

The important questions start one step beyond the hype.

If this is possible with 200,000 neurons and a hobbyist‑scale model, then in principle:

What happens at 20 million neurons?

What happens at 2 billion?
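Here is a rough calculation that puts those steps in scale, relative both to the current setup (200,000 neurons) and to the common 86 billion estimate for a human brain:

```latex
\frac{2 \times 10^{7}}{2 \times 10^{5}} = 100,
\qquad
\frac{2 \times 10^{9}}{2 \times 10^{5}} = 10^{4},
\qquad
\frac{2 \times 10^{7}}{8.6 \times 10^{10}} \approx 0.023\%,
\qquad
\frac{2 \times 10^{9}}{8.6 \times 10^{10}} \approx 2.3\%
```

So 20 million neurons is a hundredfold jump and 2 billion a ten‑thousandfold one. Yet even the larger figure is only about 2.3% of a human brain’s neuron count.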

At those scales, the analogy to “a small neighbourhood in a giant city” starts to break down. We would be closer to organ‑level structures and possibly more interesting dynamics. That does not automatically mean consciousness. But the more complex the system, the less satisfying “it’s just a dish of cells” becomes as a moral argument.

Organoid scientists are already worried about this. A 2025 STAT News piece documented how brain organoid pioneers fear a backlash as biocomputing pushes organoids beyond medical research into commercial AI infrastructure. They are right to be nervous: public perception, funding, and regulation can turn on a single emotive case.

There is also a safety angle we barely understand. If you add a partially opaque biological component into an AI system, you are introducing:

new sources of unpredictability

new failure modes

and potentially new forms of harm, if these systems ever cross a threshold where suffering becomes a live possibility.

We don’t know where that threshold is. That ignorance is not an argument to stop the research, but it is a strong argument to treat organoid‑based AI as a frontier safety topic, not a curiosity.

Organoid intelligence: when energy becomes the main character

So far I’ve focused on the weirdness: living neurons picking words.

There is another, quieter story in the background that might matter even more: energy.

Over the last two years, data‑centre and energy researchers have started to sound the alarm. Large AI workloads are pushing power demand into the gigawatt range. McKinsey and others estimate AI data centres will require major grid upgrades, and the “AI vs climate” conversation is heating up accordingly.

At the same time, a different community is pointing at something we tend to forget:

The human brain runs on roughly 20 watts – about as much as a dim light bulb.

Doing anything like its workload in silicon looks like megawatts to gigawatts, not kilowatts.
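The gap between those two lines is easier to see with a few numbers. This is a back‑of‑the‑envelope sketch. It takes 20 W for the brain and uses 1 MW and 1 GW as round reference points for the silicon range; those reference points are illustrative, not measured figures for any particular system.

```latex
20\,\text{W} \times 24\,\text{h} = 480\,\text{Wh} = 0.48\,\text{kWh per day},
\qquad
\frac{1\,\text{MW}}{20\,\text{W}} = 5 \times 10^{4},
\qquad
\frac{1\,\text{GW}}{20\,\text{W}} = 5 \times 10^{7}
```

Put differently, a megawatt is the power budget of fifty thousand brains, and a gigawatt that of fifty million.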

In early 2023, a group led by Thomas Hartung and Lena Smirnova at Johns Hopkins published what has effectively become the manifesto for this line of thinking: “Organoid intelligence (OI): The new frontier in biocomputing and intelligence‑in‑a‑dish.”

That paper does three important things:

It names the field: Organoid Intelligence, treating brain organoids as a potential computing substrate, not just disease models.

It argues that organoids could combine sample‑efficient learning, real‑time adaptation and extreme energy efficiency into a new class of “wetware” computers.

It insists on ethics and governance from day one, precisely because we may end up with systems that blur lines between tool and subject.

More recently, an overview titled “Brain Organoid Computing – an Overview” gathered the emerging evidence into one place: why organoids might be attractive for computing, what the current limitations are, and how energy fits into this picture.

The energy argument is not hand‑wavy philosophy. In Frontiers in Artificial Intelligence, Stiefel and Coggan work through the energy challenges of artificial superintelligence and conclude that, under realistic assumptions, a brute‑force silicon ASI would hit hard physical energy limits. They compare brain and chip efficiencies and find that biological brains can be on the order of hundreds of millions of times more energy‑efficient per operation.
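It helps to be clear about what “per operation” means in comparisons like this. In its simplest form, energy per operation is power divided by operation rate, and the efficiency ratio compares that figure across the two systems. This is a generic definition, not the paper’s exact method, and the symbols below are illustrative:

```latex
E_{\text{op}} = \frac{P}{R}\ \left[\frac{\text{J}}{\text{op}}\right],
\qquad
\eta = \frac{E_{\text{op}}^{\text{silicon}}}{E_{\text{op}}^{\text{brain}}}
     = \frac{P_{\text{silicon}} / R_{\text{silicon}}}{P_{\text{brain}} / R_{\text{brain}}}
```

Here P is power in watts and R is operations per second. A claim of “hundreds of millions of times more efficient” corresponds to η on the order of 10^8. R for the brain has to be estimated, because what counts as one biological “operation” is itself a modelling choice, so the ratio inherits that uncertainty.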

In parallel, companies like FinalSpark are building commercial platforms that look a lot like CL1 but with their own twist: 160,000 neurons across 16 organoids, accessible via the internet, marketed explicitly as low‑energy biocomputers for AI. Scientific American profiled them under the very direct title “These Living Computers Are Made from Human Neurons.”

If you zoom out, you get an interesting picture:

On one side, we scale up GPU clusters, push power grids to their limits, and worry about sustainability.

On the other, we start renting small clumps of human neurons over an API because they might help us compute more with less energy.

At that point, the question is no longer just “Who will build AGI first?” It quietly becomes:

If this thing ever works at scale, what kind of physical substrate will it live on – endless GPU farms, or some uncomfortable hybrid where silicon and living neurons share the load?

Recommended reading and watching

If you want to go deeper (and sanity‑check the hype), these are the key pieces I’d recommend, in roughly the order I’d read/watch them:

DishBrain: Pong‑playing neurons

Kagan et al., In vitro neurons learn and exhibit sentience when embodied in a simulated game‑world, Neuron (2022).

Monash and UCL press releases give a very accessible overview of what the experiment did and didn’t show.

Why it matters: this is the foundational experiment showing that a 2D neural culture on a chip can adapt its activity in a structured virtual environment.

Organoid Intelligence manifesto

Smirnova et al., Organoid intelligence (OI): the new frontier in biocomputing and intelligence‑in‑a‑dish, Frontiers in Science (2023).

Johns Hopkins’ explainer on OI and the first Organoid Intelligence workshop.

Why it matters: this is the conceptual framework everyone else now cites. It connects brain organoids, AI, energy efficiency and ethics in one coherent picture.

Energy limits of silicon‑only AI

Stiefel & Coggan, The energy challenges of artificial superintelligence, Frontiers in Artificial Intelligence (2023).

“A Hard Energy Use Limit of Artificial Superintelligence” preprint for more technical detail.

Why it matters: if you want to argue that we may eventually need biological or neuromorphic compute for energy reasons, this is the cleanest place to start.

Biocomputers made from human brain cells

Cortical Labs’ own CL1 page and talks.

Reuters’ short video on CL1 as “a computer that runs on living human brain cells.”

FinalSpark’s Neuroplatform and the SciAm feature “These Living Computers Are Made from Human Neurons.”

Why it matters: this is where biocomputing ceases to be a thought experiment and becomes a commercial product line.

Brain Organoid Computing overview

Talavera & Ulmann, Brain Organoid Computing – an Overview (arXiv, 2025).

Why it matters: best single technical overview of brain‑organoid computing, including energy, learning, limitations and open questions.

BioLLM: the CL1‑LLM demo

YouTube: “BioLLM – I made an AI that ‘thinks’ with REAL neurons!” by 4R7I5T (Garrett).

GitHub: 4R7I5T/CL1_LLM_Encoder (when it’s not rate‑limited).

Why it matters: this is the specific hybrid LLM + CL1 experiment that sparked the “vacation in the Maldives” headlines. It’s rough, honest, and a useful reality check against the hype.

Living computers and public imagination

National Geographic: “Scientists want to build ‘living’ computers—powered by live brain cells.”

STAT News: “Brain organoid scientists worried by push into biocomputing.”

BBC and Firstpost segments on organoid‑based computers and the ethics of “wetware”.

Why it matters: these pieces show how quickly the narrative jumps from niche research to “mini brains in jars running AI,” and how scientists themselves are trying to steer that story.

We are still at the Pong and “vacation in the Maldives” stage. It is tempting to laugh, scroll on, and file this under “weird AI side quests.”

But if you care about AI governance, energy, and the boundary between tool and subject, this strange little CL1 + LLM prototype is an early signal. Not that AGI is here, but that the hardware question is about to get much more complicated.

And at some point soon, “Which model is better?” will sound like the wrong question, compared to:

“What kind of matter do we want our intelligence to run on?”

💬 What’s your take?

Let’s talk in the comments. The hype moved on, but the lesson remains.

🔗 LinkedIn: linkedin.com/in/nesibe-kiris

🐦 Twitter/X: @nesibekiris

📸 Instagram: @nesibekiris

🔔 New here? Subscribe for weekly updates on AI governance, ethics, and policy. No hype, just what matters.
