What the Anthropic-Pentagon Conflict Reveals About Choosing AI Vendors Responsibly

AI governance, vendor risk, and why ‘all lawful purposes’ is not enough

Hi everyone,

I was not planning to publish this weekend. But Friday happened, and by Friday evening I had received more messages than after any previous TechLetter issue. Some from colleagues in governance, some from readers who had been following the Anthropic-Pentagon tension all week, some from people who simply wanted to understand what just took place and what it means.

At first glance, this may look like a story that only concerns American citizens and US defence policy. It is not. What we are really watching is how the world’s most powerful AI companies respond when their principles are tested by the world’s most powerful government. The vendor that serves the Pentagon also serves your insurance company, your university, your municipal government. The policies they hold or abandon under Washington’s pressure are the same policies that govern how your data is handled, how your inputs are logged, and what guardrails sit between your users and the model. Today it is autonomous weapons and mass surveillance. Tomorrow it could be your sector, your jurisdiction, your data. How these companies behave now tells us everything about how they will behave when the pressure comes from somewhere closer to home.

Let’s get into it.

The Company That Was Built to Say No

In 2020, a group of senior researchers left OpenAI. They were led by Dario and Daniela Amodei, siblings who had helped build GPT-2 and GPT-3 and who had grown uncomfortable with a lab that was scaling capabilities faster than safety, treating governance as friction rather than function.

They founded Anthropic in 2021 with a specific thesis: safety not as a feature but as a founding constraint. They introduced Constitutional AI, embedding inspectable principles into the model itself. They built Claude but held it back for extended internal testing; it was released publicly months after OpenAI launched ChatGPT in late 2022.

What followed was a governance trail unlike anything else in the industry.

Their Responsible Scaling Policy, now in its third version, is one of the few frameworks that ties deployment decisions to measurable capability thresholds rather than vague promises.

Their work on election-related risks, published before the 2024 cycle, showed a willingness to act proactively rather than reactively.

Their red teaming disclosures openly discuss the challenges and limitations of the process, which most labs treat as a closed box.

The AI Safety Level 3 activation was, to my knowledge, the first time a frontier lab publicly announced moving to a higher internal security posture based on capability evaluations.

Their transparency note on frontier AI made a concrete case for why the public deserves to know how these systems are tested.

Their compliance framework for California's Frontier AI Act arrived before the law required it.

I appreciate that Anthropic stress-tests its models in scenarios like blackmail and corporate sabotage. It signals a company investing not only in what a model can do but also in what it is willing to refuse.

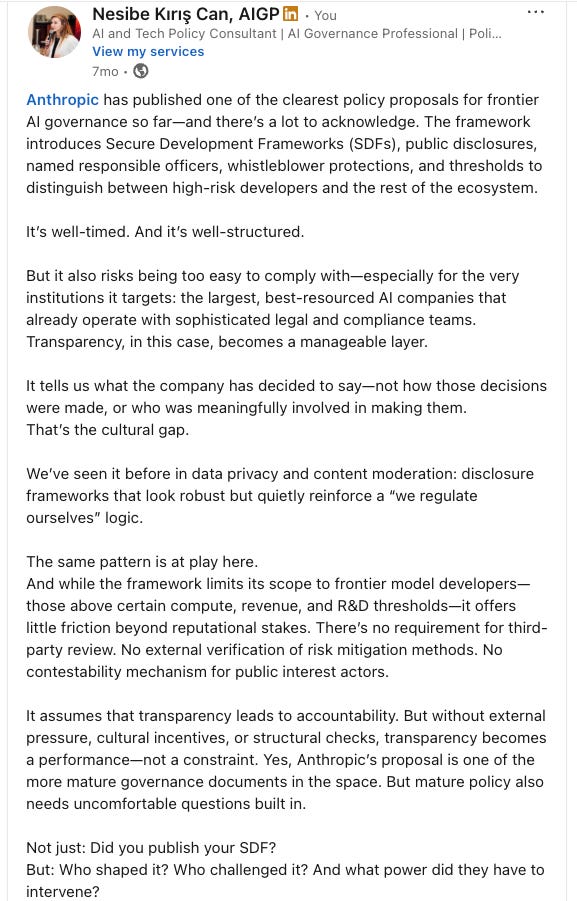

But every one of those documents was self-designed, self-published, and self-enforced. No independent body audits Anthropic’s compliance with its own scaling policy. I have been thinking about this tension for a while. When Anthropic published its Secure Development Frameworks proposal last year, I wrote on LinkedIn that it was well-timed and well-structured, but that it also risked being too easy to comply with, especially for the very labs it targets.

The questions I asked then still apply: not just “did you publish your policy?” but who shaped it, who challenged it, and what power did they have to intervene?

None of this makes Anthropic perfect. But it does make the company meaningfully different from competitors whose governance consists of a safety page and a press release. And that difference matters if you are choosing AI tools for a bank, a hospital, or a school district, because the origin story is the first layer of due diligence.

Anthropic vs the Pentagon: What Really Happened

In July 2025, the Pentagon awarded contracts worth up to $200 million each to Anthropic, OpenAI, Google DeepMind, and xAI. Anthropic was the first to be cleared for classified networks.

This week, negotiations broke down over scope. The Pentagon demanded the right to use Claude “for all lawful purposes.” Anthropic did not stop at lawful-use requirements; it also pushed for explicit protections against domestic surveillance and fully autonomous weapons. That is exactly where things collapsed. The alternative reportedly on the table was simple: “anything except what is unlawful or unsuited to cloud deployments.” Anthropic said no.

Dario Amodei went public on February 26. On autonomous weapons, he argued that frontier AI models are not reliable enough to power systems that select and engage targets without human oversight. He offered the Pentagon direct R&D collaboration; the Pentagon declined. On surveillance, he warned that powerful AI can aggregate individually innocuous data into comprehensive profiles that existing law was never written to address.

I take Amodei’s writing seriously because his risk map (autonomy, malicious actors, entrenched authoritarianism, economic shock, and unknown unknowns) has just found a very concrete stage in this dispute.

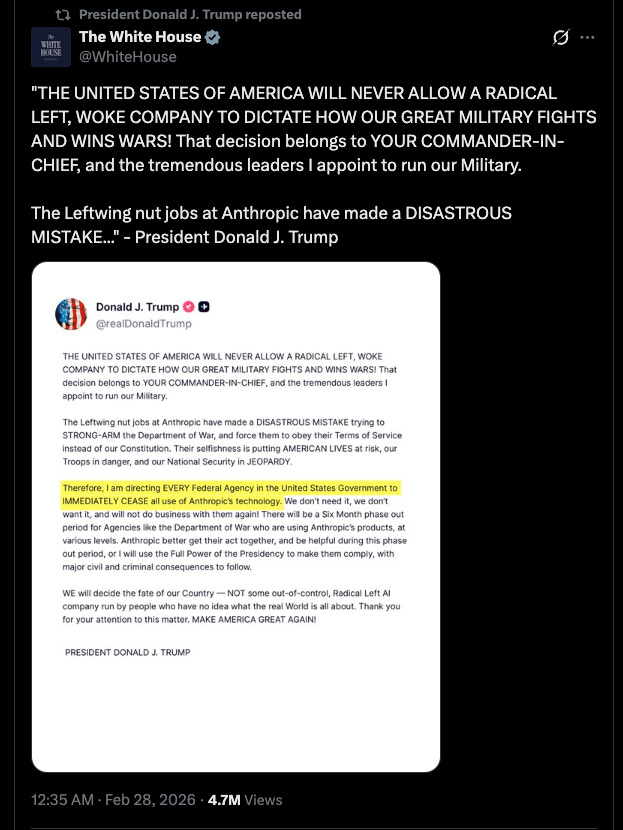

On February 27, Trump ordered every federal agency to stop using Anthropic. Defence Secretary Pete Hegseth designated it a “supply chain risk to national security,” a label normally reserved for foreign adversaries.

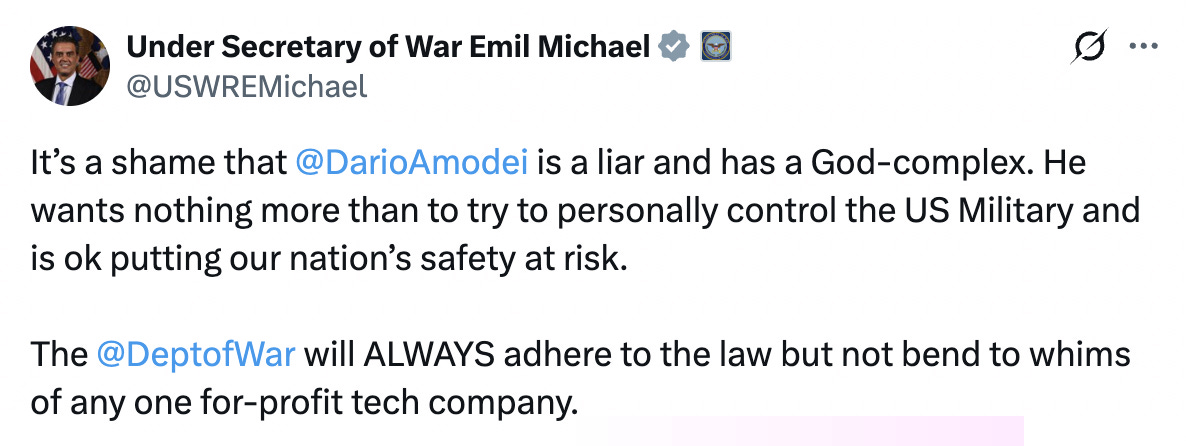

Pentagon CTO Emil Michael called Amodei a “liar” with a “God complex.”

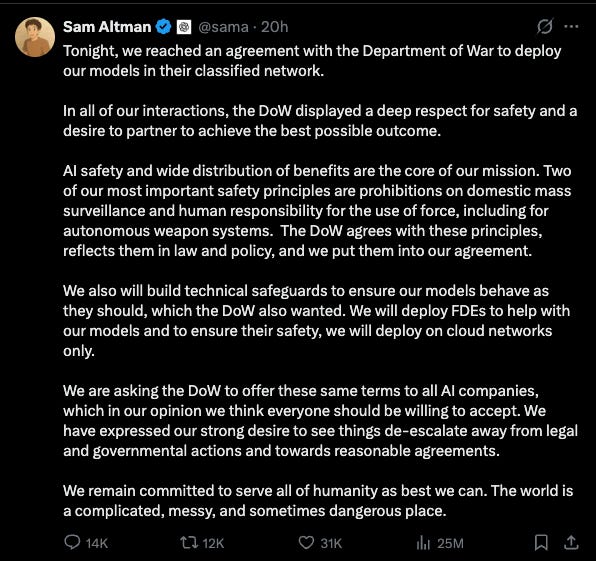

Then came a move worth reading carefully. OpenAI posted publicly that it opposed the “supply chain risk” designation.

On the surface, this looks like solidarity. A competitor defending a competitor on principle. But look at what the statement actually does.

It objects to the punishment while accepting the reward. OpenAI opposes the “supply chain risk” label but does not question the underlying dynamic: that a company was blacklisted for holding ethical red lines, and that OpenAI signed the deal the same day.

The tweet answers a question nobody was asking (“should Anthropic be labelled a security threat?”) while avoiding the one everyone is asking: why did you move in at the very moment your competitor was pushed out?

Here is what happened next:

Hours later, OpenAI CEO Sam Altman announced a deal with the Department of War to deploy ChatGPT on classified networks.

Altman said OpenAI shares Anthropic’s “red lines.”

The Pentagon agreed to OpenAI’s terms, which were apparently similar to Anthropic’s in substance but different in mechanism.

Anthropic announced it would challenge the supply-chain designation in court.

You can read this conflict in a simple way: Anthropic walked away from having its models used in mass surveillance and fully autonomous weapons. OpenAI stayed at the table under the same political weather.

Now, this was a defence contract. Most readers of this newsletter are not negotiating military AI deals. But consider the pattern, not the setting. A vendor’s terms of use were tested by a powerful client. When your most important client pushes back on a guardrail, does the vendor hold or fold?

Letter vs Spirit of the Law in AI Governance

In this dispute you can see the classic letter-versus-spirit divide.

OpenAI is comfortable with rigid legal definitions baked into the agreement.

Anthropic insists on staying in the loop to judge whether concrete uses actually honour the spirit of its principles.

OpenAI offered to build a “safety stack” of technical controls: filters built into the model, access restrictions, monitoring mechanisms, and layers of software designed to prevent misuse at the technical level.

Anthropic wanted something different: the contractual authority to refuse use cases that cross ethical lines the law has not yet drawn.

If your governance standard is “all lawful purposes,” you are delegating ethics to a legal code that everyone agrees has not yet caught up with frontier AI.

“We will follow the law” also means “we will follow the gaps in the law.”

When an insurer uses AI to aggregate health data, browsing history, and purchasing patterns to deny coverage, it might be technically lawful in many jurisdictions today.

When an HR platform uses AI to screen out candidates based on patterns that correlate with disability or pregnancy, the model may not violate any statute on the books.

“All lawful purposes” gives vendors permission to enable these uses until a legislature catches up. And legislatures, as we have all seen, are years behind.

Now place that alongside OpenAI’s policy trail:

January 10, 2024: OpenAI’s usage policies still banned “military and warfare” use.

January 12, 2024: The ban was gone. No blog post. A changelog note.

2024-2025: Pentagon partnerships began.

February 27, 2026: OpenAI signed a classified-networks deal hours after Anthropic was blacklisted.

OpenAI’s own Charter promises to avoid enabling uses of AI that harm humanity or unduly concentrate power. At minimum, that makes its silence on the substance of Anthropic’s treatment very hard to defend.

A policy that changes overnight, without explanation, is not a governance framework. It is a positioning statement. And if your organisation relies on a vendor whose commitments can shift that quietly, you are carrying a risk that no security audit will surface.

Two Bets, Two Philosophies of AI Safety

Most coverage has missed a deeper layer.

OpenAI’s approach to model behaviour looks like a detailed rulebook.

Anthropic’s looks like a virtue framework. One tells the model what to do in each case; the other tries to shape what kind of agent it becomes.

Anthropic’s model spec does not just list dos and don’ts; it tries to encode a philosophical and ethical framework and then bets that a sufficiently capable model can reason its way through ambiguity and moral complexity.

Anthropic is making two simultaneous bets: a philosophical bet on a particular conception of virtue, and a technical bet that deep learning can instill that virtue robustly in a neural network.

Whether or not you share Anthropic’s exact values, the mere fact that a major lab walked away from a nine-figure contract over surveillance and autonomy should update how seriously we take its governance story.

The most interesting philosophy of AI is happening in San Francisco offices and late-night strategy calls between labs and governments.

For practitioners, the distinction between these two approaches is not theoretical.

A rulebook-based model will do anything its rules do not explicitly forbid.

A virtue-based model is designed to reason about intent and context.

When you deploy AI in a healthcare setting, a financial advisory workflow, or a child-facing education product, the difference between “not explicitly prohibited” and “inconsistent with our principles” is the difference between a tool that enables harm by omission and one that has at least some capacity to resist.

Anthropic Is Not a Moral Exception

Critics are right about one thing: Anthropic is becoming more like OpenAI every day in some dimensions. Racing on capabilities. Reliant on billions from Google, Amazon, Nvidia, and Microsoft. Operating closed models under real commercial strain. Just days before the Pentagon crisis, Anthropic announced a loosening of its own Responsible Scaling Policy, acknowledging that its stricter safety approach had failed to persuade competitors.

I have argued that Anthropic is drifting from pure safety rhetoric toward a conventional corporate posture. That critique has weight. A company that raises billions in big-tech funding, competes on benchmarks and market share, and relaxes safety commitments when the competitive heat rises does not get to claim permanent moral exceptionalism.

And yet, in this episode, Anthropic behaved like a company that still believes some deals are worse than no deal.

My goal here is not to replace uncritical OpenAI loyalty with uncritical Anthropic loyalty. The Pentagon episode matters not because Anthropic is above criticism, but because it provides a rare, concrete data point about institutional character when the cost is real. Walking away from every federal contract in the United States is meaningful. But the concern I raised about Anthropic’s own Secure Development Frameworks has not gone away: without external pressure or structural checks, even the most impressive governance archive in the industry remains something the company can adjust when incentives shift.

If the principle becomes “build something important enough and the state gets to set your terms,” we have created an incentive structure that punishes exactly the kind of vendor behaviour we claim to want.

What This Crisis Teaches Us About AI Ethics and Governance

This story is about the Pentagon. But the lessons belong to every sector that depends on AI.

Law is not the same as ethics, and the gap is where real harm lives. “All lawful purposes” is not a ceiling. It is a floor with holes in it. There is no US federal statute that specifically addresses AI-enabled mass profiling from commercially available data. There is no comprehensive federal privacy law. Most employment discrimination statutes were written decades before algorithmic screening existed. When a vendor says “we comply with all applicable laws,” they are telling you their floor, not their ceiling.

Self-regulation has real limits, even from the best actors. I described Anthropic’s governance trail earlier and I meant every word of praise. But it remains voluntary. The Responsible Scaling Policy is a self-imposed constraint that the company can, and did, loosen when the market demanded it. Boards and regulators cannot outsource their ethical obligations to vendor blog posts, no matter how thoughtful those posts are.

Ethics is risk management, not just values marketing. Treating governance as a cost centre or a PR exercise misses the point entirely. Consider what happened this week through a risk lens:

Litigation risk: Organisations that deployed AI tools later found to enable surveillance or discrimination will face lawsuits. The vendor’s terms of service at the time of deployment become exhibit A.

Reputational risk: When a vendor’s policies shift and that shift becomes public, as OpenAI’s did, every client associated with that vendor inherits the reputational fallout.

Regulatory risk: The EU AI Act, state-level AI laws in the US, and sector-specific rules in healthcare and finance are all tightening. A vendor whose policies are designed to stay one step ahead of regulation carries a different risk profile from one that scrambles to comply after the fact.

Systemic risk: If your AI vendor is designated a supply-chain risk overnight, as Anthropic was, your operations, integrations, and data flows are directly affected. Vendor governance is operational resilience.

The difference between “values marketing” and enforceable governance is structural. A beautiful safety page with no independent review and no contractual red lines is branding. A versioned policy archive that survives contact with the most powerful government on earth is something closer to governance. Neither is perfect. But the distance between them is measurable, and it should inform procurement.

Why AI Tool Policies Are Everyone’s Business

The scenario I described in “The AI You’ll Never See” played out in real time this week: the most consequential governance decisions happening behind closed doors, on classified networks, invisible until a negotiation collapses. The pattern I wrote about in “Enterprise AI’s Biggest Risk” shows up again: the persistent gap between how fast organisations deploy AI and how slowly their governance matures.

The Davos 2026 shift from experimentation to institutionalisation, where boards suddenly woke up to structural risk, found its clearest test case this week. And it arrived too late for the institutions involved.

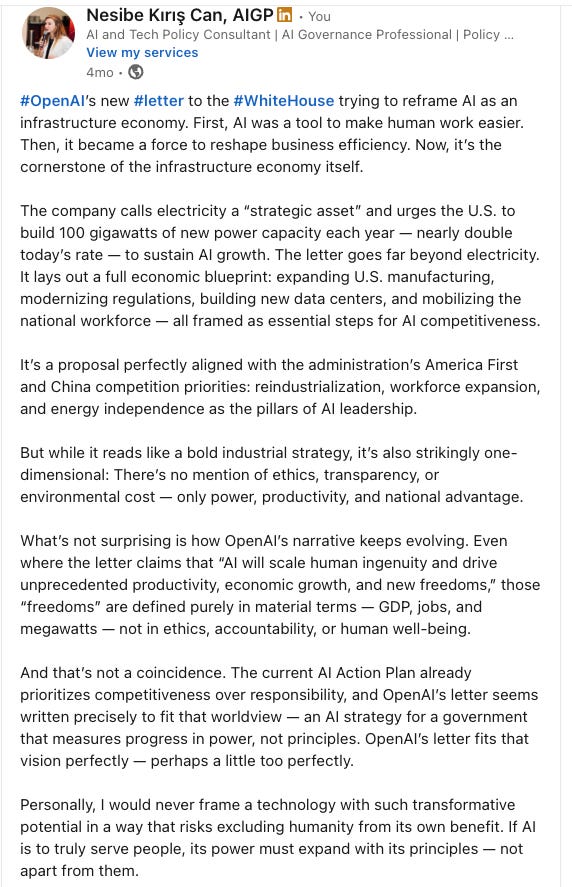

In my analysis of OpenAI’s “infrastructure economy” letter, I noted that the company defines AI progress entirely in material terms: GDP, jobs, megawatts. No ethics, no transparency, no environmental cost.

The Pentagon deal follows the same framing. And in my piece on America’s AI Action Plan, I wrote that governance becomes opt-in under the current administration. I called it “transparency as branding.” This week, branding met pressure.

As I have always argued, we can no longer evaluate AI tools on model quality alone; we have to account for the ethical values and political pressures that shape these systems. That applies whether you are a defence ministry or a mid-sized company choosing between ChatGPT, Claude, and Gemini for your customer service team.

How I Choose AI Vendors in 2026

As an AI governance consultant, I evaluate vendors on institutional behaviour, not benchmarks. The questions I bring to every assessment are the ones this week made unavoidable.

I have argued for restricting ChatGPT use in organisations I advise. Not because the models are inferior, but because the governance signals do not add up: a military-use ban that vanished without explanation, data practices that remain opaque, and a pattern of adjusting commitments to fit the room.

I do not outsource my ethics to any single vendor. But the difference between a company that absorbs the loss of every US federal contract rather than drop two red lines, and a company that quietly deletes a military ban and signs a Pentagon deal the same day its competitor is blacklisted, is a difference worth naming.

Where This Leaves Us

Dario Amodei, asked on Friday night if he had a message for the President, said: “Everything we have done has been for the sake of this country. Disagreeing with the government is the most American thing in the world.”

Whether this blows over or escalates, the precedent is set. We now know which company held, which company stayed at the table, and which company saw an opportunity.

The lesson is not “pick Anthropic” or “avoid OpenAI.” The lesson is that AI governance, ethics, and vendor integrity are now operational questions. They affect your data, your liability, your regulatory exposure, and your ability to serve your own customers and citizens responsibly.

If your vendor’s red lines can be quietly rewritten in a changelog, they are not red lines. They are marketing copy.

The question for every reader this week is not which AI model scores highest on a benchmark. It is which vendor’s governance would survive the same test, and whether you have done enough due diligence to know the answer before the moment arrives.

💬 What’s your take?

Let’s talk in the comments. The hype moved on, but the lesson remains.

🔗 LinkedIn: linkedin.com/in/nesibe-kiris

🐦 Twitter/X: @nesibekiris

📸 Instagram: @nesibekiris

🔔 New here? Subscribe for weekly updates on AI governance, ethics, and policy. No hype, just what matters.

