The EU AI Act's August Deadline Is Gone. Here Is Why and What It Actually Means

The EU AI Act Just Bought Itself More Time. Whether That Time Gets Used Well Is a Different Question.

Hello everyone. I have to admit, I am a little excited writing this one.

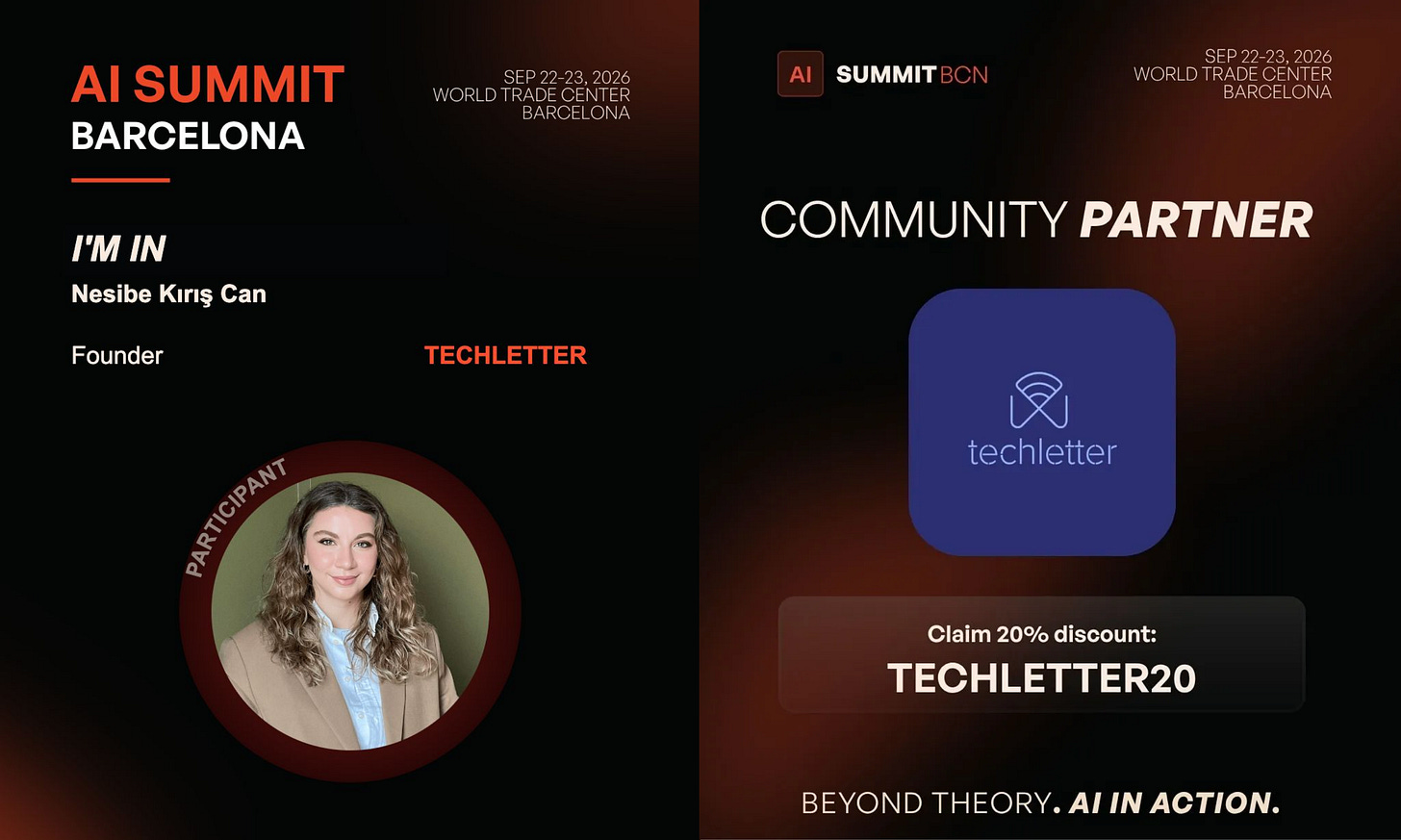

Some of you have been here since the early days of TechLetter, when it was just me, a Substack page, and a stubborn belief that AI governance was worth writing about every single week. So when I tell you that TechLetter is now an official Community Partner of AI Summit Barcelona 2026, I want you to know what that means to me. More on that at the end, including a small surprise.

But first, something happened in Brussels last week that I have been wanting to write about properly. While everyone in the EU AI compliance world was scrambling to get something ready before August, a different kind of news arrived. On 7 May 2026, in the early hours of the morning, after overnight negotiations, EU co-legislators reached a provisional political agreement on the Digital Omnibus on AI, a bill that amends the EU AI Act and moves its most consequential deadlines. The industry exhaled. Civil society filed its objections. And I have been sitting with the question of whether this is good news or a slow-motion problem we will revisit in 2027.

Probably both. Let me explain.

I have been following this file for months. And my honest read is this: the Digital Omnibus on AI is not a rewrite of Europe’s AI rules. It is an acknowledgment that the infrastructure needed to implement those rules was not ready. That is an important distinction, and most of the coverage has blurred it.

Before the Omnibus, I shared an EU AI Act Cheat Sheet as a reference. Now that the deal is done, I have also put together an updated one-pager reflecting the new compliance map. You will find it at the end of this issue.

Why August 2026 Was Already in Trouble

The EU AI Act was adopted in 2024. Its logic was sound: classify AI systems by risk, require high-risk categories to demonstrate conformity against harmonised technical standards, have notified bodies verify that conformity. By 2 August 2026, Annex III high-risk systems, think hiring tools, credit scoring, biometric identification, would need to comply.

The problem is that the compliance infrastructure the Act relied on did not arrive on schedule. Harmonised technical standards were formally recorded as significantly delayed. The Commission then missed its own statutory deadline for issuing Article 6 classification guidance, which was due in February 2026. Notified body capacity remained limited across member states.

In practice, companies preparing for August 2026 were being asked to build conformity architecture against standards that did not yet fully exist. The legal exposure of getting it wrong was real. The cost of preparing against a moving target was real. And Brussels knew it.

This is the honest reason the Digital Omnibus on AI bill exists. The competitiveness argument and industry pressure were present, yes. But the structural problem was created, at least partly, inside the EU’s own implementation timeline. That context matters for how you read everything that came next.

What Collapsed the April Trilogue

The first two trilogue sessions under the Cypriot Presidency did not resolve everything. The second session, on 28 April 2026, ended without agreement after roughly twelve hours of negotiation. A press conference was cancelled. The question at that point was whether a deal could be reached before August at all.

What broke the session was not the deadline extension itself. That was broadly agreed across the institutions. The file that collapsed everything was the conformity assessment architecture for AI systems embedded in products already governed by EU sectoral safety law: industrial machinery, medical devices, toys, lifts, watercraft.

Parliament’s position, backed by Germany, was to move Annex I products toward primarily sectoral handling. The rationale was real: requiring companies to run parallel conformity assessments under both the AI Act and existing product safety law was disproportionate and, in some cases, technically incoherent. The Council resisted reopening the structural architecture of the Act. Under trilogue logic, nothing is agreed until everything is agreed. So the deadline extension, the new prohibited practices, everything else sat waiting on this one unresolved file.

The third trilogue opened the evening of 6 May and closed just before 5 in the morning on 7 May. The political negotiation is over. Formal endorsement and publication in the Official Journal still need to happen, but the outcome is settled.

What the Digital Omnibus Bill Actually Does

The deal is a compromise, and the details matter more than the headline.

On timelines:

Obligations for stand-alone high-risk AI systems under Annex III, the category that covers hiring tools, credit scoring, biometric identification and critical infrastructure management, now apply from 2 December 2027, pushed back from 2 August 2026

Obligations for AI embedded in regulated products under Annex I, think machinery, medical devices, toys, lifts, watercraft, apply from 2 August 2028

Crucially, these dates are now fixed. They no longer move with standards availability. The political argument for delay has been used once, and the institutions signalled clearly that it will not be used again.

On the Annex I architecture:

The Machinery Regulation receives a direct carve-out: AI within that regulation is exempted from direct AI Act applicability, with health and safety requirements added instead through delegated acts under the Machinery Regulation itself

For other Annex I sectors, the Commission can limit AI Act application through implementing acts where sectoral law already covers equivalent AI-specific requirements

The Commission is also obligated to issue guidance for sectoral operators to minimise compliance overlap

What was strengthened, not weakened:

AI systems generating non-consensual sexual or intimate content, including child sexual abuse material, are now explicitly prohibited under a new Article 5 provision, capturing nudification apps specifically

Database registration for AI systems considered exempted from high-risk classification, a transparency safeguard that allows regulators and the public to see what is out there, was reinstated after the Commission’s proposal to delete it was rejected

What did not move:

All Article 5 prohibited practices, the hard bans on things like social scoring and real-time biometric surveillance in public spaces, remain intact

Article 50(1) obligations, which require providers to disclose when users are interacting with an AI system, remain on track for 2 August 2026

Article 50(2) watermarking, the requirement to label AI-generated images, audio and video as synthetic, still applies from 2 August 2026, but the Omnibus adds a transitional period: providers with systems already on the market by then have until 2 December 2026 to comply

GPAI obligations, the rules covering large general-purpose models like the ones powering most AI products today, have been in force since 2 August 2025 and are unaffected

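If you are shipping generative AI into the EU, watermarking is your nearest live engineering deadline: 2 August 2026 for new systems, and 2 December 2026 for systems already on the market. That second date is roughly seven months away.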
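For teams that want these dates in a form they can check against a calendar, here is a minimal sketch in Python. The dates are the ones reported in this issue and depend on formal adoption of the provisional agreement. The labels, `DEADLINES`, `whole_months_between` and `upcoming` are illustrative names of my own, not official terminology or any official tool.

```python
from datetime import date

# Dates as reported in this issue. They rest on a provisional political
# agreement and only become binding after formal adoption and publication.
DEADLINES = {
    "Article 50(1) AI interaction disclosure": date(2026, 8, 2),
    "Article 50(2) watermarking, new systems": date(2026, 8, 2),
    "Article 50(2) watermarking, systems already on the market": date(2026, 12, 2),
    "Annex III stand-alone high-risk systems": date(2027, 12, 2),
    "Annex I AI embedded in regulated products": date(2028, 8, 2),
}


def whole_months_between(start: date, end: date) -> int:
    """Count completed calendar months from start to end."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the final month is not yet complete
    return months


def upcoming(today: date) -> list[tuple[str, date, int]]:
    """Return deadlines that have not passed, soonest first."""
    rows = [
        (label, due, whole_months_between(today, due))
        for label, due in DEADLINES.items()
        if due >= today
    ]
    return sorted(rows, key=lambda row: row[1])


if __name__ == "__main__":
    for label, due, months in upcoming(date.today()):
        print(f"{due.isoformat()}  {months:>2} full months left  {label}")
```

The same helper reproduces the delay discussed in this issue: the move from 2 August 2026 to 2 December 2027 for Annex III systems comes out at 16 months.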

The Debate Was Substantive, and Some of It Was Not Heard

The deal was contested, and the contestation was substantive.

On the civil society side, 60 organisations including EDRi sent a joint letter specifically urging co-legislators to reject the proposed deletion of Article 49(2), a transparency safeguard requiring providers who self-assess their Annex III systems as not high-risk to register that exemption in the public EU database. Their argument was straightforward: weakening it would reduce enforcement visibility while offering negligible benefits to companies.

The Delors Centre raised a harder procedural critique: the Digital Omnibus bill was advanced through an accelerated procedure with limited public consultation and no comprehensive impact assessment, on provisions that had taken years to negotiate in the original AI Act trilogue.

On the industry side, the response was more qualified than expected. The Machinery Regulation carve-out was welcomed, but several legal observers noted that the Annex I compromise shifts a significant amount of interpretive work to the Commission’s implementing acts. In other words, the uncertainty does not disappear with the political agreement. It moves to a later stage.

For me, the most analytically important issue is the grandfathering effect. AI systems deployed before the new compliance deadlines are exempt from many obligations that systems deployed after them must meet. A 16-month delay is not administratively neutral. It shapes which systems get built, deployed and normalised during that window, and under what accountability conditions. That is most consequential in exactly the domains where ungoverned AI has the most documented human cost: hiring decisions, credit assessments, biometric identification.

The EU bought time from a structural problem it helped create. The question that matters now is whether that time gets used to build the standards, guidance and supervisory capacity that make December 2027 real, or whether it becomes a second runway to a second renegotiation.

What This Means in Practice

Three things follow practically from this deal.

If you are building or deploying AI in the EU:

Watermarking is your nearest deadline. UI labelling, machine-readable metadata, and detection capability for any generative AI already shipped into the EU need to be production-ready by 2 December 2026, and systems placed on the market from 2 August 2026 must comply from day one. That is roughly seven months of engineering time, not planning time.

Treat 2 December 2027 and 2 August 2028 as hard dates. They no longer shift with standards availability, and there is no credible political path to a third postponement.

Do not treat the delay as permission to pause your compliance program. The standards, codes of practice and interpretive guidance that the whole architecture depends on will continue arriving through 2027. The organisations that build governance infrastructure now will be distinctly better positioned than those that wait for perfect clarity.
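To make the watermarking item above a little more concrete, here is a rough sketch of what "machine-readable metadata" plus a basic check could look like. Everything in it is an assumption for illustration: the field names, `build_provenance_record` and `matches_record` are hypothetical, and this is not a format prescribed by the AI Act or by any standard. A sidecar record like this is also easy to separate from the content, so treat it as a starting point for internal discussion, not as a robust watermark.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_provenance_record(content: bytes, generator: str) -> dict:
    """Build a hypothetical machine-readable label for a generated asset."""
    return {
        "ai_generated": True,  # illustrative field name, not a legal term
        "generator": generator,
        "sha256": hashlib.sha256(content).hexdigest(),
        "created_at": datetime.now(timezone.utc).isoformat(),
    }


def matches_record(content: bytes, record: dict) -> bool:
    """Check that a record marks the content as AI-generated and still
    refers to exactly these bytes."""
    digest = hashlib.sha256(content).hexdigest()
    return record.get("ai_generated") is True and record.get("sha256") == digest


if __name__ == "__main__":
    asset = b"example bytes standing in for a generated image"
    record = build_provenance_record(asset, "example-image-model")
    print(json.dumps(record, indent=2))
    print(matches_record(asset, record))
```

The design choice worth debating internally is where the label lives. A separate record is simple to produce, while embedding the signal in the content itself is harder to build but survives re-uploads and screenshots better.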

If you operate in machinery, medical devices, toys, lifts or watercraft:

You are now navigating a dual-track framework: the AI Act and the implementing acts the Commission will adopt to define the interplay with your sectoral law. Track both from now, not from when the guidance arrives.

One thing worth noting on timing: the provisional political agreement is not yet law. It still requires formal endorsement by Council and Parliament, legal-linguistic revision, and publication in the Official Journal.

Until that happens, the EU AI Act in its original form remains the legally binding text.

As I wrote in the cheat sheet: delay extends the runway. Whether companies use that runway well depends less on Brussels handing over more time, and more on Brussels handing over clearer rules.

Regulation is only as meaningful as its implementation, and implementation happens in product teams and compliance functions, not in Brussels. That gap is what I want to spend time on in Barcelona.

I Will Be at AI Summit Barcelona!

Okay, here is something I am genuinely excited about.

TechLetter is an official Community Partner of AI Summit Barcelona 2026, and I will be there in person on 22-23 September.

Frontier AI leaders, builders, researchers and governance practitioners, all in one place in Barcelona for a full week of events. I will be attending stages, doing speaker interviews, and sending everything back to you as proper analysis, not a conference recap.

The speaker list is still growing, so I will share more as it takes shape.

But if there is someone you want me to talk to, or a question you have been sitting with about European AI, regulation, deployment in practice, anything, write it in the comments or hit reply.

I am building my interview list now and I want it to reflect what this community actually wants to understand.

And the small surprise I mentioned at the top: TechLetter readers get 20% off with the code TECHLETTER20 at aisummitbarcelona.com. Early bird pricing is live now.

See you in Barcelona.

Nesibe

Disclosure: TechLetter is an official Community Partner of AI Summit Barcelona 2026.

💬 Let’s Connect:

🔗 LinkedIn: [linkedin.com/in/nesibe-kiris]

🐦 Twitter/X: [@nesibekiris]

📸 Instagram: [@nesibekiris]

🔔 New here? Subscribe for weekly updates on AI governance, ethics, and policy! No hype, just what matters.
